The Real Product Management Work Isn’t the Spreadsheet

How I used AI to facilitate an RFP evaluation for my nonprofit board, and what I learned about the gaps nobody sees coming.

I serve on the board of a nonprofit that provides outdoor STEM education to local schools. We needed to select an architectural firm to create a site master plan for our 311-acre campus expansion.

The subcommittee drafted an RFP. Firms responded. Now we had to evaluate them.

This should have been straightforward. It wasn’t.

What started as “build an evaluation form” turned into a four-month lesson in something I thought I already understood: the real PM work isn’t the deliverable. It’s noticing when a group isn’t aligned on what they’re deciding, and building the artifacts to create that shared picture.

AI helped at every stage. But I had to notice the gap. AI didn’t do that.

Phase 1: Building the Evaluation System

The first task seemed simple. Create a way for board members to evaluate RFP responses consistently.

I broke it into pieces:

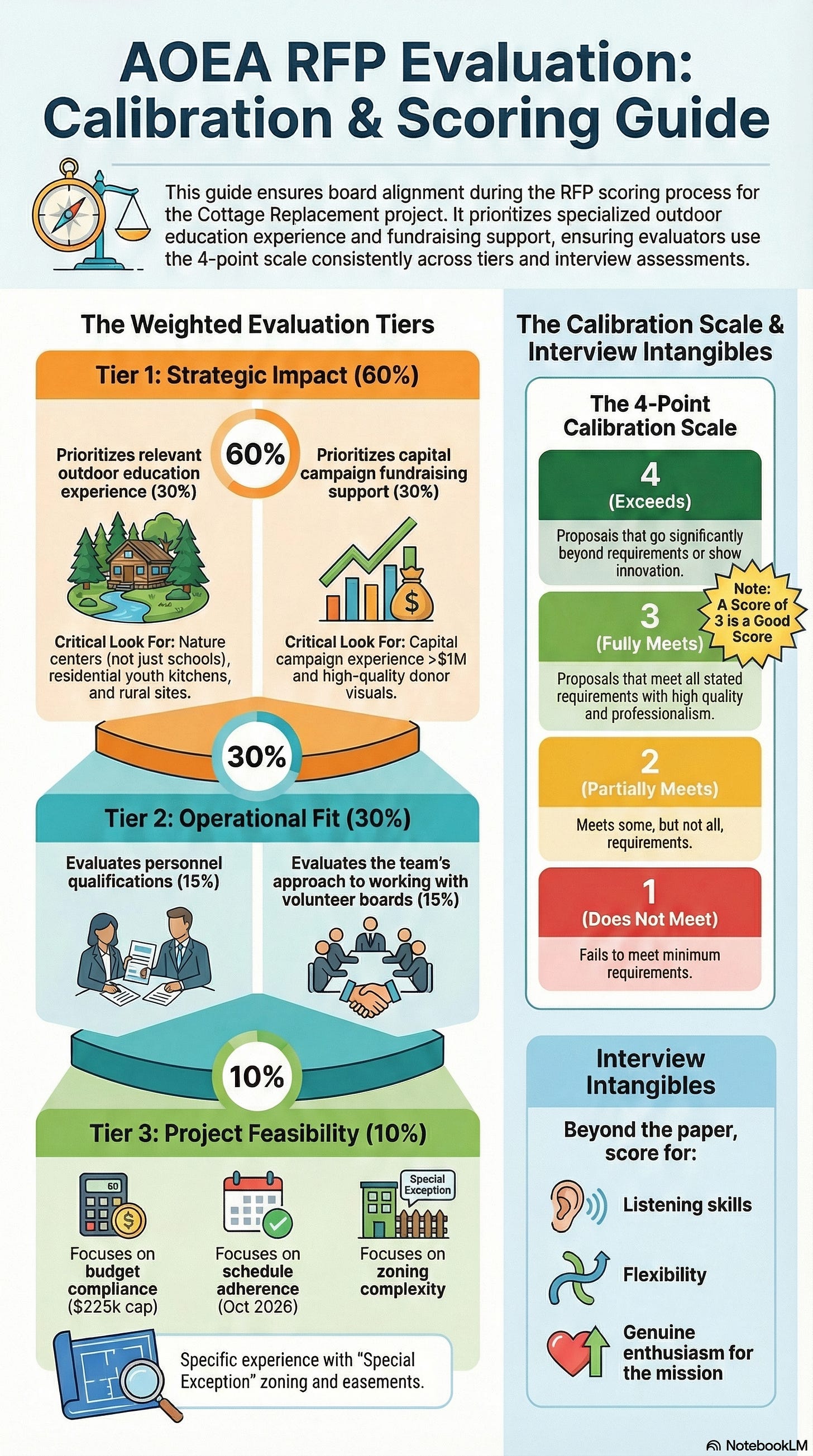

Extract the evaluation criteria from the RFP

Create a scoring guide explaining how to evaluate and at what levels

Build an evaluation form for each board member

Connect the forms to a sheet that aggregates scores automatically

Two days of work compressed into 2-3 hours.

But the time saved wasn’t the point. AI handled the drudgery: reading through documents, building forms, writing formulas, formatting cells.

That freed me to think about what matters.

Yes, budget and schedule are standard criteria. Every RFP evaluation includes them. But we’re an organization striving to provide quality outdoor STEM education to local schools. Generic criteria won’t cut it.

I needed to make sure we were measuring whether the responding firms understand our mission. Have they done this work before? Do they understand outdoor education? Can they push us forward, or will they deliver the minimum?

Those questions required my brain. Weighting them appropriately required judgment. Framing them for board members who care deeply about this mission required thought.

AI didn’t answer those questions. AI gave me the time and space to answer them well.
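For readers who want to see the mechanics, here is a minimal Python sketch of the kind of weighted aggregation a response sheet can perform. The real work lived in spreadsheet formulas; the weights, the 1-5 scale, the firm names, and every score below are illustrative assumptions, not the actual weights or results.

```python
from statistics import mean

# Hypothetical weights for illustration only; they must sum to 1.0.
WEIGHTS = {
    "mission_alignment": 0.40,
    "experience": 0.35,
    "budget": 0.25,
}


def weighted_score(scores, weights=WEIGHTS):
    """Collapse one evaluator's criterion scores into a single weighted score."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)


def aggregate(responses, weights=WEIGHTS):
    """Average each firm's weighted score across all evaluators.

    responses: list of (evaluator, firm, {criterion: score}) tuples,
    one tuple per submitted form.
    """
    by_firm = {}
    for _evaluator, firm, scores in responses:
        by_firm.setdefault(firm, []).append(weighted_score(scores, weights))
    return {firm: round(mean(vals), 2) for firm, vals in by_firm.items()}


# Made-up form responses on an assumed 1-5 scale.
responses = [
    ("Evaluator 1", "Firm A", {"mission_alignment": 5, "experience": 4, "budget": 3}),
    ("Evaluator 2", "Firm A", {"mission_alignment": 4, "experience": 4, "budget": 3}),
    ("Evaluator 1", "Firm B", {"mission_alignment": 3, "experience": 4, "budget": 5}),
    ("Evaluator 2", "Firm B", {"mission_alignment": 3, "experience": 5, "budget": 4}),
]

totals = aggregate(responses)
for firm, score in sorted(totals.items(), key=lambda kv: kv[1], reverse=True):
    print(firm, score)
```

The arithmetic is the easy part. Choosing the criteria and the numbers in `WEIGHTS` is where the judgment goes, and a small change in those weights can flip a close ranking.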

Why Individual Scoring Before Group Discussion

I designed the process so each board member completes their evaluation independently before we meet to discuss.

Someone might push back: “Individual scoring before discussion creates anchoring bias. People see the numbers and stop thinking.”

Here’s why I disagree.

Without structure, board meetings favor the loudest voices. The most confident speakers. The people who think quickly on their feet. That’s not the same as the best thinking.

Individual scoring forces each person to engage with the material beforehand. It creates accountability. You can’t hide in a group discussion if you’ve already committed your thinking to paper.

This matters for introverts especially.

The preliminary phase isn’t limiting. It’s liberating. Introverts have already processed. They’ve already formed views. When the meeting starts, they’re not formulating thoughts in real time while extroverts dominate airtime. They’re ready.

I’ve seen too many board discussions where three people talk and five people nod. This process design aims to change that ratio.

The Miss I’ll Own

Here’s something I should have done differently.

I shared the weighting and scoring criteria with the board president, but not with the rest of the subcommittee. I should have invited more input on how we weighted mission alignment versus budget versus experience.

I don’t think the outcome would have changed. But the process would have built more buy-in. When people contribute to how decisions get made, they trust the results more.

This is a miss I’ll remember for next time.

Phase 2: From Evaluation to Finalists

The evaluations did their job. We identified finalists.

More importantly, the structured scoring helped frame WHY they were the finalists. The criteria made our reasoning visible. When we discussed, we weren’t starting from “I liked Firm A better.” We were starting from “Firm A scored higher on mission alignment because of X, Y, and Z.”

That’s the value of structure. It translates gut feelings into a shared language.

We moved to interviews. Then reference checks. Then follow-up questions.

Each stage generated more material. RFP responses. Evaluation scores. Interview notes. Interview scoring. Reference feedback. Written answers to clarifying questions.

I needed to synthesize all of it into something the full board could use to make a decision.

Phase 3: The Gap Nobody Announced

Here’s where things got interesting.

As I prepared materials for the full board, I realized something important. We weren’t all aligned on what a “site master plan” actually produces and how it connects to physical outcomes.

We knew we needed one. We wrote the RFP asking for one. But when I thought about what the full board would need to make a confident decision, I realized we hadn’t created a shared picture of what success looks like.

This is natural. An RFP uses professional language. It sounds complete. The work moves forward because everyone trusts the process. But “trusting the process” isn’t the same as “seeing the same picture.”

We were about to ask the board to select a firm to produce deliverables that meant different things to different people. That’s not a recipe for confident decisions. Or for a smooth project once work begins.

Filling the Gap: Sometimes You Need a Human Expert

Once I noticed the alignment gap, I had to fill it. AI could help me build artifacts, but it couldn’t tell me what questions a board should ask when evaluating architectural firms for a site master plan.

I reached out to two college roommates. Both are architects.

I asked a specific question: Some firms integrate civil engineering from day one, while others wait until after the initial site assessment to define the civil scope. Is there a standard approach for this type of project?

One friend walked me through the nuance. His advice: you want civil engineering identified at the beginning, but not necessarily fully engaged. Have them available to vet civil considerations as the design team pursues options, but don’t burn their time too early. Keep the early conversations focused on educational objectives and site opportunities before diving into cubic yards of dirt to move.

The other friend added a different angle. There are hidden costs beneath the site that you want to understand early: stormwater mitigation requirements, utility connections, possible ecological issues like endangered species or protected habitats, and archaeological considerations. Architects don’t always know about these. You need the civil engineer, and possibly an ecology consultant, to surface them.

Together, the two conversations took about 30 minutes. They shaped everything I built afterward: the pre-read document, the visuals, and the way I framed what a site master plan produces and why we need civil engineering involved early.

AI is good at synthesizing information you already have. It’s less good at telling you what you’re missing. For that, I needed people with domain expertise who could point out the gaps I didn’t know existed.

Phase 4: Building the Communication Artifacts

I needed to close this gap before the board meeting. That meant creating materials that answer two questions:

What does “finished” look like?

How do we get from here to there?

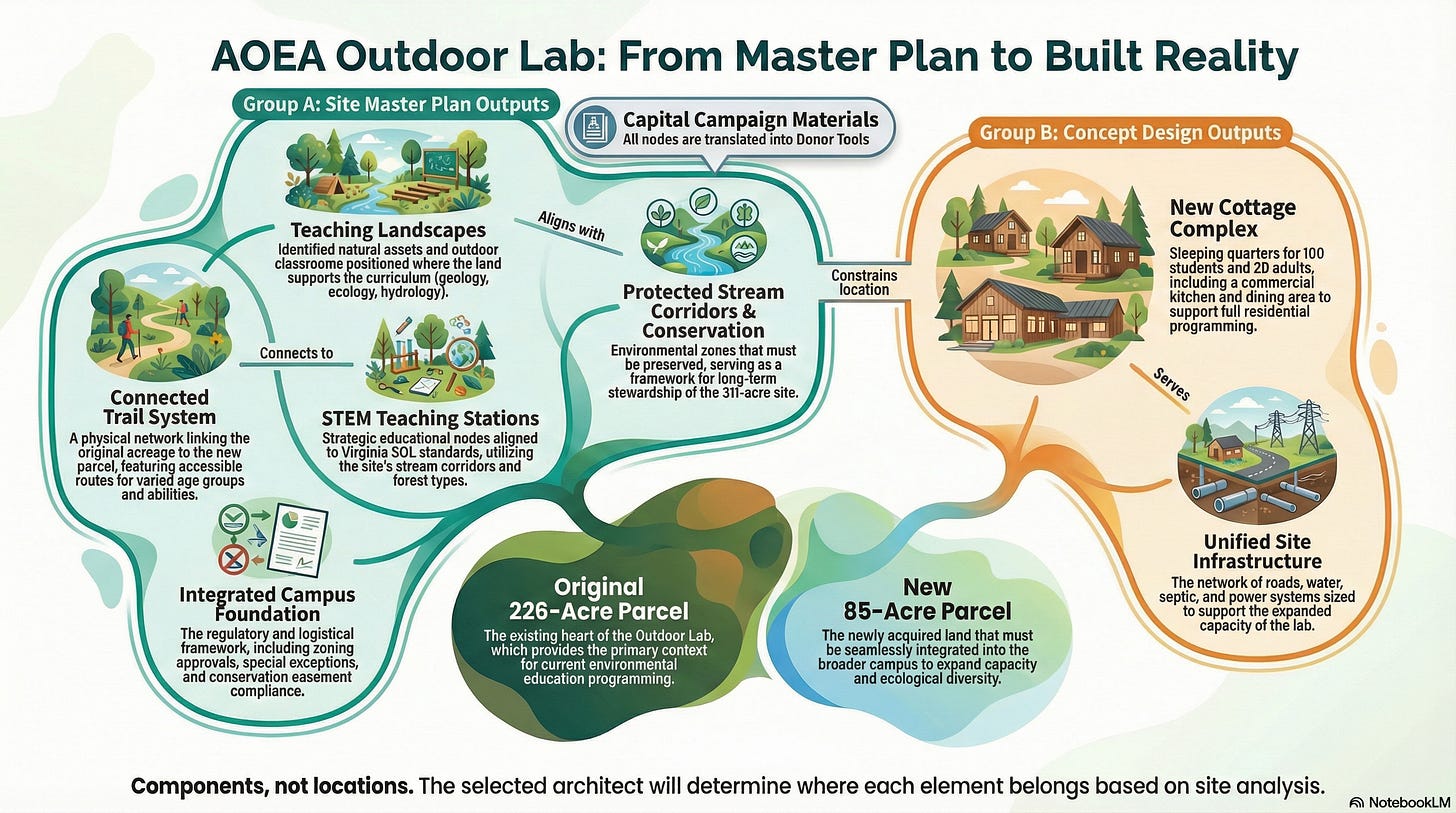

For the first question, I created a visual showing all the components of the completed campus. New cottage complex. Connected trail system. Teaching landscapes. STEM teaching stations. Integrated infrastructure. Conservation protections.

The visual shows how everything connects. It works backwards from the end state so board members can picture what success looks like.

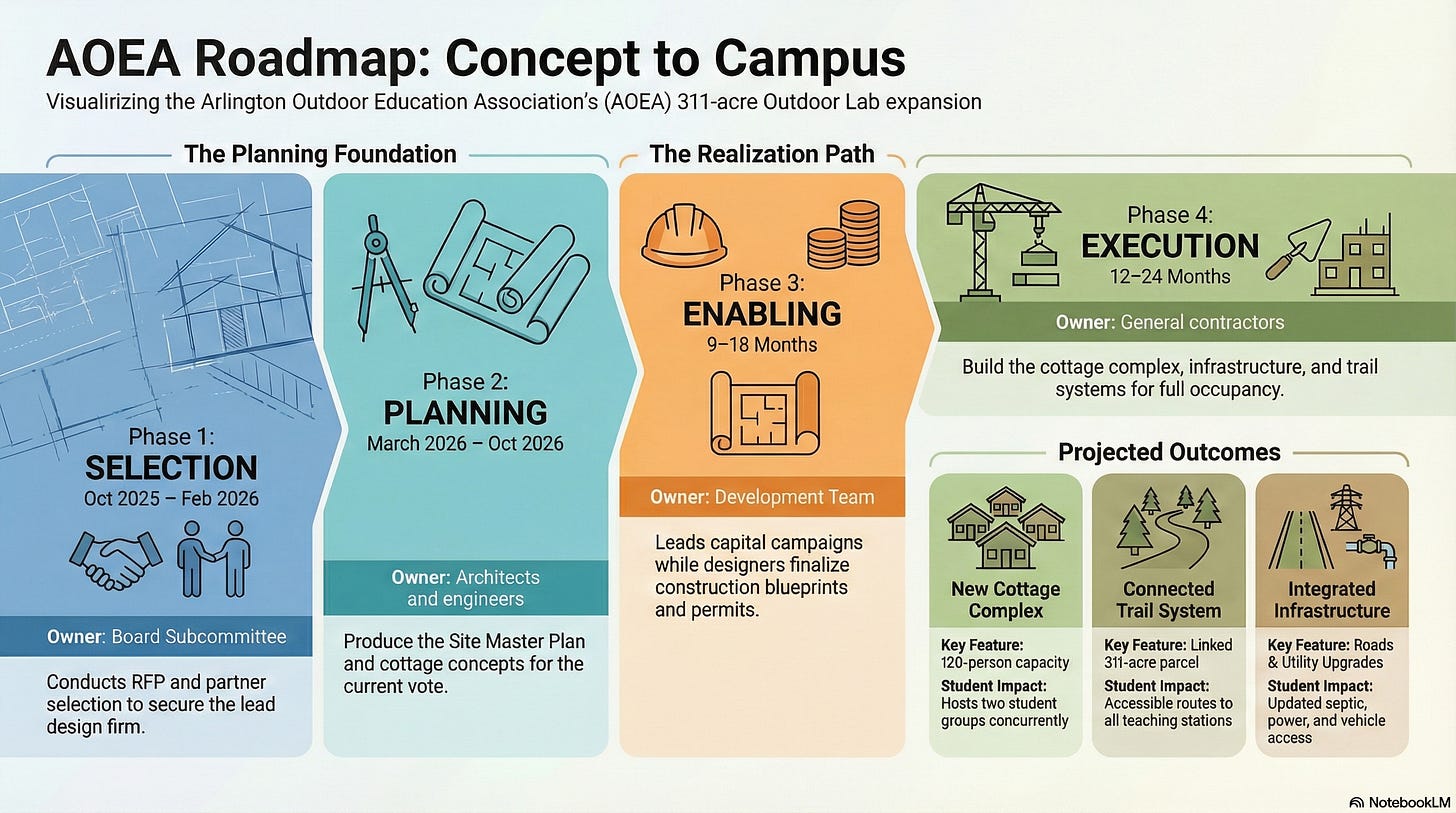

For the second question, I created a roadmap showing every phase from now to finished. Phase 1: Selection (where we are now). Phase 2: Planning (what this RFP produces). Phase 3: Enabling (capital campaign and construction documents). Phase 4: Execution (actual building).

Each phase shows who owns it, what it produces, and how long it takes.

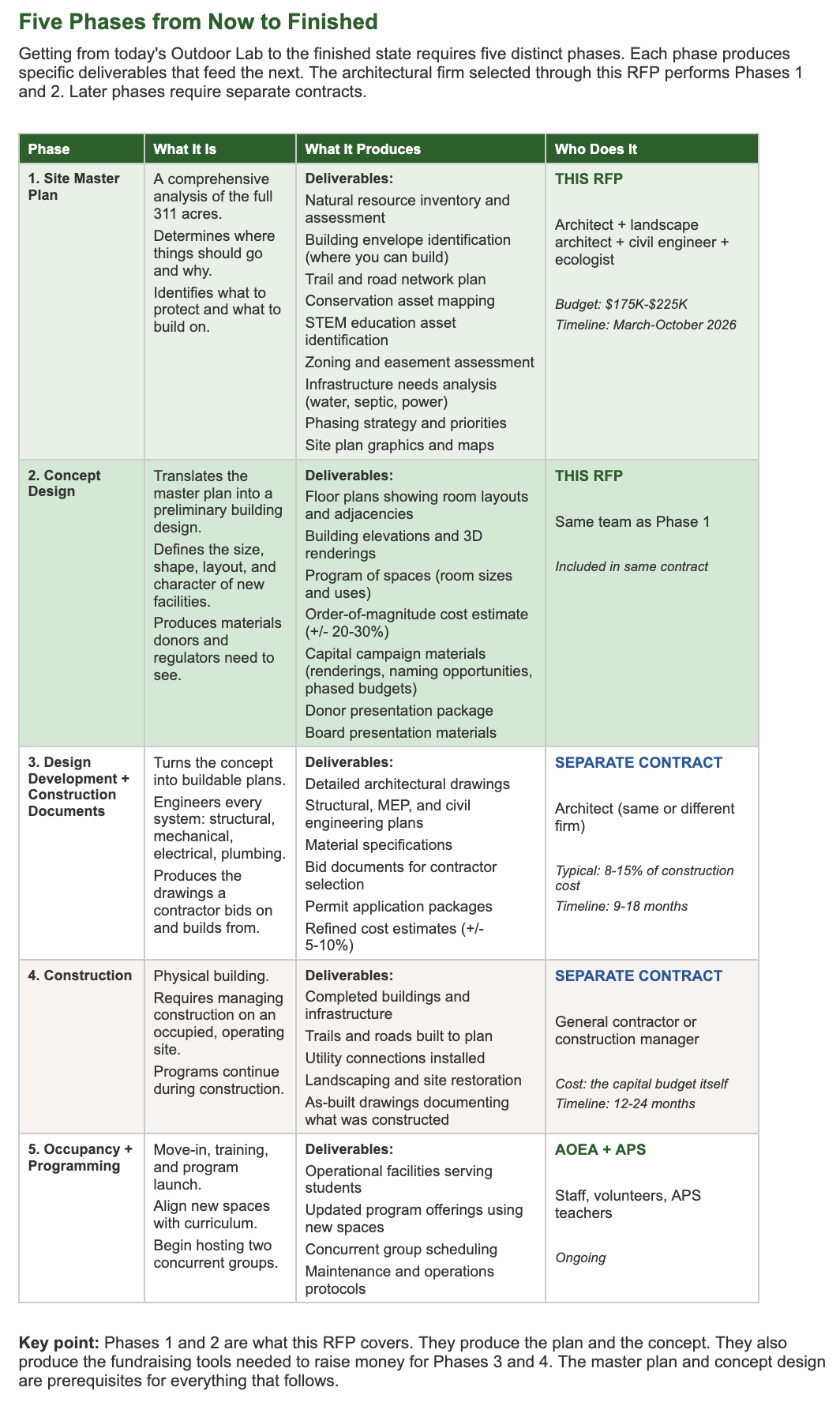

I also wrote a pre-read document that explains what a site master plan produces and why it matters. It maps each deliverable from the current RFP to the physical outcome it enables.

The document starts with what “finished” looks like, then works backwards through the phases, then connects specific line items in the RFP to the outcomes they make possible.

The Tool Stack

Different tools for different jobs.

I think of AI tools as different screwdrivers. Phillips head and flat head. Both screwdrivers. Different applications.

Claude excels at synthesis and thinking. I used it as a partner to extract criteria from the RFP, create the scoring companion guide, and reason through what we should evaluate and why.

Gemini excels at wiring things together. It helped me build the Google Form, connect it to the response sheet, and write the formulas that aggregate scores. Gemini was fantastic with spreadsheet formulas.

NotebookLM excels at synthesis across many documents. When I needed to create the pre-read and presentation from the full body of material (RFP, responses, evaluations, interviews, references, follow-up answers), NotebookLM handled the context that exceeded what other tools could manage.

Claude, I love working with you. But your context window made some tasks challenging. NotebookLM filled that gap.

Each tool has strengths. Knowing when to switch matters.

What I Learned

The real PM work isn’t the spreadsheet. Or the form. Or the scoring guide.

The real PM work is noticing when the group isn’t aligned on what they’re deciding, and building the communication artifacts to create that alignment.

AI removed friction at every stage. It handled criteria extraction, form building, formula writing, document synthesis, and visual creation. That work would have taken days. It took hours.

But AI didn’t notice that we weren’t all seeing the same picture of what a site master plan produces. I noticed that when I started preparing materials for the full board. AI didn’t notice that we needed to show the full journey from selection to execution. I noticed that when I imagined sitting in their seats.

The gap-noticing is the PM work. The artifact-building is the execution. AI helps with execution. The noticing still requires a human paying attention.

What You Can Take From This

If you serve on a board, lead a small team, or facilitate group decisions, here are the patterns worth remembering:

Individual evaluation before group discussion creates more inclusive participation. It forces preparation and gives introverts equal footing.

Structured scoring translates gut feelings into shared language. It makes reasoning visible and discussable.

Communication gaps don’t announce themselves. You find them by accident and fix them on purpose. A group can move forward on something without sharing the same mental picture of what it produces.

Working backwards from “finished” clarifies everything. Start with what success looks like. Then map the path to get there.

Different tools for different jobs. Know when to switch.

And finally: get input on process design early. The outcome might not change, but the buy-in will.

What’s Next

The board meeting is coming. I’ll learn what worked and what needs adjustment.

I’ll share what happens.

If you’ve used PM skills outside your day job, in a nonprofit, a community group, or a side project, I’d like to hear about it. Reply and tell me what you’re working on.
